Processor Whispers: Of hUMA and humour

by Andreas Stiller

On the occasion of the ten-year Opteron anniversary, AMD was able to present not only a better balance sheet than last year, but also the new shared memory architecture for CPU and GPU called hUMA.

It's 5 October 1999, and at the Microprocessor Forum in the Fairmont Hotel in San Jose, a total of five gigantic chips are being announced. Intel developer Harsh Sharangpani kicks it off with the first Itanium processor, named Merced. Jim Kahle, chief architect back then and an IBM Fellow today, presents the Power4. Joel Emer – now an Intel Fellow, then employed at Compaq, which had just swallowed up DEC – impresses the audience with the features of the planned Alpha EV8. Michael Shebanow of HAL Computer Systems continues the show of giants with the SPARC64 V.

The highlight, however, comes at the end: to everyone's amazement, Fred Weber – AMD's chief architect at that time, now a consultant at Samsung – presents the SledgeHammer, the first true 64-bit processor for x86. The advance announcement had only mentioned "a processor of the Athlon family for workstations and servers" – hardly anyone had anything like it on their radar or had expected it. Least of all Intel's developers, whose Itanium presentation shortly before paled in comparison.

Besides a very cleverly embedded 64-bit extension, the AMD64 architecture also included an integrated memory controller, fast serial links called Lightning Data Transport (LDT), and a new FPU. Two years later, when Fred Weber announced concrete details about the first chip at the same event, I was sitting right next to some of Intel's developers and could literally feel their agitation as Weber boasted about the planned SPEC2000 benchmark results. By then, LDT had been renamed HyperTransport, and AMD's own new and fundamentally better FPU had been sacrificed in favour of Intel's SSE; apart from that, everything was going according to plan. From then, it still took one and a half years until the Opteron (K8) was officially introduced on 22 April 2003 – and about ten years until 64-bit applications really became widespread.

At the Microprocessor Forum 2001, Fred Weber revealed concrete details and first performance figures for the planned Opteron processor.

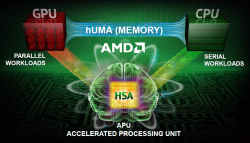

On the occasion of the Opteron's ten-year anniversary, alongside a "certain amount of consolidation" – a loss of only $146 million compared with $590 million in the year-ago quarter – AMD wanted to announce something trendsetting again: hUMA, heterogeneous uniform memory access, the new memory architecture for HSA (Heterogeneous System Architecture), whose name sounds like "humour". But whether AMD will again be able to play the pioneer here is still up in the air: Intel is already close with the integrated graphics in Ivy Bridge, which support OpenCL, and with Haswell might even offer a shared memory model before AMD.

There is talk of Haswell versions with 128MB of embedded DRAM as a kind of L4 cache that both GPU and CPU can access. Details about cache coherence, page tables and programming models are still missing, though. As for AMD's hUMA, it is known that the hardware will guarantee cache coherence between CPU and GPU cores. The drawback of cache coherence is the extra protocol overhead of snooping all accesses among the caches; so-called probe filters are supposed to limit that overhead, which is also expected to improve energy efficiency.
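To get a feeling for why a probe filter saves traffic, here is a deliberately simplified toy model. It is not AMD's design: the class `ToySystem`, the function `count_probes`, the four caches and the access pattern are all invented for illustration, and the model ignores writes, evictions and the limited capacity a real filter has. It only counts how many snoop probes a read miss triggers with broadcast snooping versus a directory that remembers which caches hold a line.

```python
# Toy model, not AMD's implementation: counts snoop probes on read misses
# with and without a probe filter (a directory of which caches hold a line).

class ToySystem:
    def __init__(self, num_caches, use_probe_filter):
        self.caches = [set() for _ in range(num_caches)]  # lines held per cache
        self.use_probe_filter = use_probe_filter
        self.directory = {}   # line address -> ids of caches holding the line
        self.probes_sent = 0

    def read(self, cache_id, line):
        if line in self.caches[cache_id]:
            return  # local hit, no coherence traffic
        if self.use_probe_filter:
            # Probe only the caches the directory lists as holders.
            targets = self.directory.get(line, set()) - {cache_id}
        else:
            # Broadcast snooping: probe every other cache.
            targets = set(range(len(self.caches))) - {cache_id}
        self.probes_sent += len(targets)
        self.caches[cache_id].add(line)
        self.directory.setdefault(line, set()).add(cache_id)


def count_probes(use_probe_filter):
    """Run a fixed, made-up access pattern and return the probe count."""
    system = ToySystem(num_caches=4, use_probe_filter=use_probe_filter)
    # Line 0 is shared by all caches; the other lines are private.
    for cache_id in range(4):
        for line in (0, 100 + cache_id, 200 + cache_id):
            system.read(cache_id, line)
    return system.probes_sent


if __name__ == "__main__":
    print("broadcast:", count_probes(False))
    print("probe filter:", count_probes(True))
```

With this made-up pattern, broadcasting sends 36 probes while the filtered variant sends 6, because private lines no longer disturb the other caches. Fewer probes means less protocol traffic, which is where the hoped-for energy savings would come from.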

In addition, pages used by the GPU no longer need to be locked in memory – they can be integrated into the standard virtual memory management of the operating system or hypervisor. For programmers, things become much more relaxed with hUMA: they no longer have to move data back and forth between two memory areas, nor keep track of which memory currently holds the data. Conventional libraries such as BLAS can thus be used efficiently without any changes. Larger amounts of data can conveniently be moved to the GPU through paging.
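The difference in programming style can be sketched conceptually. The following is not HSA or OpenCL code; plain Python lists stand in for CPU and GPU memory, and the function names `scale_with_copies` and `scale_shared` are made up for this illustration. The first function mimics the classic model with separate memories, the second the shared model in which the "GPU" works directly on the data the CPU already holds.

```python
# Conceptual sketch only: Python lists stand in for CPU and GPU memory.

def scale_with_copies(host_data, factor):
    """Separate memories: copy to the device, compute, copy back."""
    device_buffer = list(host_data)                      # host -> device copy
    device_buffer = [x * factor for x in device_buffer]  # stand-in for a GPU kernel
    result = list(device_buffer)                         # device -> host copy
    elements_copied = 2 * len(host_data)
    return result, elements_copied


def scale_shared(shared_data, factor):
    """Shared memory: the stand-in kernel updates the caller's buffer in place."""
    for i in range(len(shared_data)):
        shared_data[i] *= factor
    return shared_data, 0  # nothing had to be copied


if __name__ == "__main__":
    data = [1, 2, 3]
    print(scale_with_copies(data, 2))  # input list stays untouched
    print(scale_shared(data, 2))       # input list itself is modified
```

In the first variant the caller has to remember that the input and the result live in different buffers; in the second there is only one buffer, which is the bookkeeping that hUMA is meant to take off the programmer's hands.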

Gamasutra secrets

Whether the hUMA chips use DDR3 or GDDR5 memory depends on whether they are meant to focus on CPU or on GPU/graphics workloads. Kaveri, the first AMD chip with hUMA and Steamroller cores, which is scheduled for the second half of this year, is said to support both kinds of memory. Whether Kaveri's fast GDDR5 SDRAM will be upgradable with plug-in modules is another question – in any case, GDDR5 SO-DIMMs are in the works at the semiconductor engineering standardisation body JEDEC.

With hUMA, GPU and CPU can access shared data at fine granularity without having to copy it back and forth.

Source: AMD

How well the simultaneous processing of computing and graphics with hUMA will work in the end remains to be seen. The question is: why has Sony come up with a proprietary solution for the interaction of the eight Jaguar cores and the Radeon graphics in the PS4 SoC it developed together with AMD, instead of relying on Kaveri with hUMA? Just recently, Sony's chief architect, Mark Cerny, revealed some details about the shared memory model of the PS4 SoC to the web site Gamasutra. Instead of hUMA, Sony calls the model handling the PS4's 8GB of GDDR5 memory "supercharged". Cerny explained that the PS4 GPU can directly access the system memory via a special bus, bypassing its L1 and L2 caches. Additionally, the L2 cache received a new tag bit, "volatile", which is supposed to optimise the interaction between computing and graphics processes. Also, at Sony's request, the number of computing tasks that can be held in the queues was raised from two, as in the current Radeon, to 64.

Well, it will be interesting to see which memory model Microsoft has chosen for its next Xbox, which will also rely on AMD Jaguar and Radeon. The Redmond company intends to reveal more details about its next game console, which is apparently called "Xbox Infinity" now, via a livestream on 21 May.

(djwm)
