Benchmark structure

We will look at a scenario involving 2,000,000 documents, each associated with at most three users and tags. A task containing at most 10,000 documents is computed directly; otherwise it is split into two new tasks, each covering half of its documents.
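
The splitting rule can be sketched as a `RecursiveTask`. This is a minimal sketch, not the benchmark's actual code: it assumes each document is reduced to a single `int` workload value, and `processDocument` is a hypothetical placeholder for the real per-document map step.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Hypothetical sketch: documents are modelled as an int[] of per-document workloads.
public class DocumentTask extends RecursiveTask<Long> {
    static final int THRESHOLD = 10_000; // compute directly at or below this many documents

    private final int[] documents;
    private final int from; // inclusive
    private final int to;   // exclusive

    public DocumentTask(int[] documents, int from, int to) {
        this.documents = documents;
        this.from = from;
        this.to = to;
    }

    @Override
    protected Long compute() {
        if (to - from <= THRESHOLD) {
            // Small enough: process the documents sequentially.
            long result = 0;
            for (int i = from; i < to; i++) {
                result += processDocument(documents[i]);
            }
            return result;
        }
        // Otherwise split into two tasks, each covering half of the documents.
        int mid = (from + to) >>> 1;
        DocumentTask left = new DocumentTask(documents, from, mid);
        DocumentTask right = new DocumentTask(documents, mid, to);
        left.fork();                        // left half becomes available to other workers
        long rightResult = right.compute(); // this thread works on the right half
        return left.join() + rightResult;
    }

    // Hypothetical placeholder for the real per-document work.
    static long processDocument(int workload) {
        return workload;
    }

    public static long run(int[] documents, int parallelism) {
        ForkJoinPool pool = new ForkJoinPool(parallelism);
        try {
            return pool.invoke(new DocumentTask(documents, 0, documents.length));
        } finally {
            pool.shutdown();
        }
    }
}
```

Calling `left.fork()` and then computing the right half in the current thread keeps that thread busy, while the forked half waits in its queue where an idle worker can pick it up.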

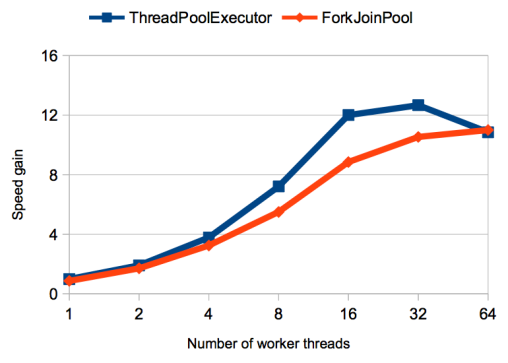

The benchmark results below are given as the speed gain achieved for a range of worker thread counts (see figures 2, 3 and 6). The speed gain is the running time of a sequential computation on a single thread (with no pool) divided by the running time of the same benchmark using the ThreadPoolExecutor or the ForkJoinPool. Each running time is the mean of 20 runs of the same scenario.

To minimise the effect of the just-in-time compiler, ten warm-up runs are performed in the same JVM before each benchmark. Using a fixed heap size of 4GB kept the share of total running time taken up by garbage collection well under 5 per cent. All benchmarks were run on a computer with 32 virtual processors (16 physical cores plus hyper-threading).
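
The measurement procedure can be sketched as a small harness: warm-up runs, then the mean over the measured runs, then the ratio of sequential to parallel time. This is an illustrative sketch only; the class and method names are hypothetical, and the workloads passed in stand in for the article's benchmark code.

```java
import java.util.function.LongSupplier;

// Hypothetical harness mirroring the described methodology:
// warm-up runs in the same JVM, then the mean of the measured runs.
public class SpeedupBenchmark {
    static final int WARMUP_RUNS = 10;   // warm-up runs before measuring
    static final int MEASURED_RUNS = 20; // runs averaged per scenario

    // Results are written here so the JIT is less likely to discard the work.
    static volatile long blackhole;

    // Returns the mean running time in nanoseconds over the measured runs.
    public static double meanNanos(LongSupplier workload, int warmupRuns, int measuredRuns) {
        long sink = 0;
        for (int i = 0; i < warmupRuns; i++) {
            sink += workload.getAsLong(); // warm-up: results consumed, time ignored
        }
        long totalNanos = 0;
        for (int i = 0; i < measuredRuns; i++) {
            long start = System.nanoTime();
            sink += workload.getAsLong();
            totalNanos += System.nanoTime() - start;
        }
        blackhole = sink;
        return (double) totalNanos / measuredRuns;
    }

    // Speed gain = sequential running time / parallel running time.
    public static double speedGain(double sequentialNanos, double parallelNanos) {
        return sequentialNanos / parallelNanos;
    }
}
```

A speed gain for one thread count would then be `speedGain(meanNanos(sequential, WARMUP_RUNS, MEASURED_RUNS), meanNanos(parallel, WARMUP_RUNS, MEASURED_RUNS))`. For rigorous measurements, a dedicated harness such as JMH handles warm-up, dead-code elimination and JVM forking more thoroughly than this hand-written loop.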

Analysing the results

As can be seen from figure 2, both the ThreadPoolExecutor and the ForkJoinPool accelerate computation compared to sequential computation. Up to the number of available processors, the ThreadPoolExecutor achieves a noticeably higher speed gain. This is exactly the sort of result that often mystifies developers, since the scenario would appear to be well suited to fork/join.

Up to the number of available processors, the ThreadPoolExecutor achieves a higher speed gain than the ForkJoinPool (figure 2).

The explanation for this is that all input tasks entail a similar computing load. The ForkJoinPool's recursive splitting and work stealing are therefore unnecessary and, compared to the ThreadPoolExecutor, merely add the overhead of creating additional tasks. Although MapReduce is well suited to a ForkJoinPool, that doesn't mean a ForkJoinPool is always the best solution. If a problem can be reliably partitioned into equally sized parts, the ThreadPoolExecutor (or even explicitly starting and joining specific threads) is often preferable.
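
Static partitioning with a ThreadPoolExecutor can be sketched as follows: the documents are cut into one equally sized chunk per worker thread, with no further splitting and no work stealing. This is a hypothetical sketch, not the article's benchmark code; the per-document work is again reduced to summing an `int` workload value.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.Future;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

// Hypothetical sketch: one fixed, equally sized chunk per worker thread.
public class StaticPartitioning {
    public static long run(int[] documents, int threads) {
        // Fixed-size pool: core size == maximum size, unbounded work queue.
        ThreadPoolExecutor executor = new ThreadPoolExecutor(
                threads, threads, 0L, TimeUnit.MILLISECONDS, new LinkedBlockingQueue<>());
        try {
            List<Callable<Long>> chunks = new ArrayList<>();
            int chunkSize = (documents.length + threads - 1) / threads; // ceiling division
            for (int from = 0; from < documents.length; from += chunkSize) {
                final int start = from;
                final int end = Math.min(from + chunkSize, documents.length);
                chunks.add(() -> {
                    long result = 0;
                    for (int i = start; i < end; i++) {
                        result += documents[i]; // placeholder for the real per-document work
                    }
                    return result;
                });
            }
            long total = 0;
            for (Future<Long> future : executor.invokeAll(chunks)) {
                total += future.get(); // combine the partial results
            }
            return total;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for chunks", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("chunk failed", e.getCause());
        } finally {
            executor.shutdown();
        }
    }
}
```

Only `threads` tasks are ever created, which is where the saving over recursive splitting comes from when every chunk costs about the same.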

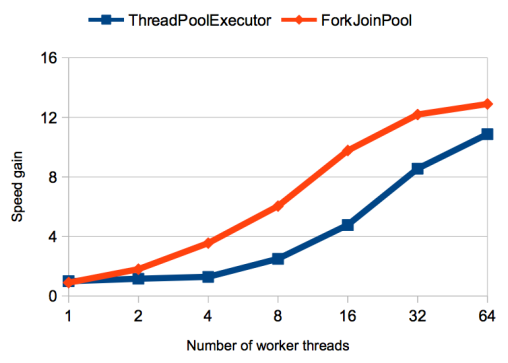

In practice, however, you can't necessarily rely on the workload being evenly split across input tasks. Some documents may be shared by many more users, for example, or have many more tags than others. A second analysis of our example will therefore explore a scenario in which a quarter of all documents are associated with up to four times as many users and tags.
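
A skewed input of this kind could be generated along the following lines. This is a hypothetical sketch under assumptions the article does not state: each document's cost is modelled as a single count of associated users/tags drawn uniformly from 1 to its maximum, and the heavy quarter forms one contiguous block at the start of the array.

```java
import java.util.Random;

// Hypothetical generator for the skewed scenario. Assumptions (not from the article):
// workload = one count of associated users/tags, minimum 1, heavy quarter stored first.
public class SkewedDocuments {
    static final int NORMAL_MAX = 3;             // at most three users and tags
    static final int HEAVY_MAX = 4 * NORMAL_MAX; // up to four times as many

    public static int[] generate(int count, long seed) {
        Random random = new Random(seed); // fixed seed for reproducible runs
        int[] workloads = new int[count];
        int heavyCount = count / 4;       // a quarter of all documents
        for (int i = 0; i < count; i++) {
            int max = i < heavyCount ? HEAVY_MAX : NORMAL_MAX;
            workloads[i] = 1 + random.nextInt(max); // uniform in [1, max]
        }
        return workloads;
    }
}
```

Whether the heavy documents are clustered or scattered matters a great deal for static partitioning: clustered heavy documents all end up in the same chunk.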

With an unevenly distributed workload, the ForkJoinPool again achieves good results, while the ThreadPoolExecutor suffers under the uneven distribution (figure 3).

As can be seen in figure 3, the ForkJoinPool's speed gain is not reduced; in fact it is a little higher, as the total workload has risen. The ThreadPoolExecutor, by contrast, suffers significantly from the imbalance introduced. This is because the ForkJoinPool uses work stealing to balance the load between threads automatically, whereas the ThreadPoolExecutor has no mechanism for load balancing.

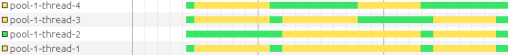

To illustrate this, figure 4 shows the state of the worker threads while computing the benchmark using a ThreadPoolExecutor with four threads (measured using VisualVM; green means running, yellow means waiting). One thread carries the bulk of the workload on its own. This explains why the ThreadPoolExecutor performs somewhat better as the number of threads rises: the imbalance is spread across more threads.

With the ThreadPoolExecutor, one thread performs most of the computation, while the others wait (figure 4).
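
Why one overloaded thread hurts so much can be made concrete with a back-of-the-envelope calculation: with static, equally sized chunks, the running time is set by the slowest chunk, so the total workload divided by the largest chunk's workload is an upper bound on the speed gain. The sketch below is illustrative and hypothetical; it ignores task overhead, caches and scheduling, and simply computes that bound for a given array of per-document workloads.

```java
// Hypothetical illustration: with static, equally sized chunks the slowest chunk
// determines the running time, so total / max(chunk load) bounds the speed gain.
public class StaticImbalance {
    public static double speedGainBound(int[] workloads, int threads) {
        int chunkSize = (workloads.length + threads - 1) / threads; // ceiling division
        long total = 0;
        long maxChunk = 0;
        for (int from = 0; from < workloads.length; from += chunkSize) {
            int end = Math.min(from + chunkSize, workloads.length);
            long chunk = 0;
            for (int i = from; i < end; i++) {
                chunk += workloads[i]; // load assigned to this worker thread
            }
            total += chunk;
            maxChunk = Math.max(maxChunk, chunk);
        }
        return maxChunk == 0 ? 0.0 : (double) total / maxChunk;
    }
}
```

For example, four chunks with loads 4, 1, 1 and 1 give a bound of 7 / 4 = 1.75 instead of the ideal 4, whereas four equal chunks reach the full 4.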

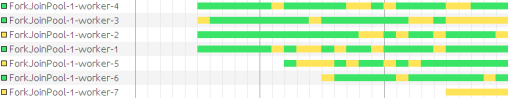

For comparison, figure 5 shows that, thanks to work stealing, the ForkJoinPool threads are much better utilised. It can also be seen that additional worker threads are started when active threads have to wait for the results of other tasks. Even so, only four worker threads are actually running at any one time; the rest are idle.

With the ForkJoinPool, work stealing and creating additional worker threads as required results in automatic load balancing (figure 5).
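
The pool's behaviour can also be inspected programmatically rather than with VisualVM. This is a minimal sketch with a hypothetical `SumTask` standing in for the benchmark's tasks. `getParallelism()`, `getPoolSize()` and `getStealCount()` are standard `ForkJoinPool` methods, but the printed values depend on the JDK version and the machine, and `getStealCount()` is documented as an estimate.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Hypothetical sketch: run a divide-and-conquer task and query the pool's counters.
public class PoolStats {
    static final class SumTask extends RecursiveTask<Long> {
        private static final int THRESHOLD = 10_000;
        private final int[] workloads;
        private final int from, to;

        SumTask(int[] workloads, int from, int to) {
            this.workloads = workloads;
            this.from = from;
            this.to = to;
        }

        @Override
        protected Long compute() {
            if (to - from <= THRESHOLD) {
                long sum = 0;
                for (int i = from; i < to; i++) {
                    sum += workloads[i];
                }
                return sum;
            }
            int mid = (from + to) >>> 1;
            SumTask left = new SumTask(workloads, from, mid);
            left.fork(); // queued where idle workers can steal it
            long right = new SumTask(workloads, mid, to).compute();
            return left.join() + right;
        }
    }

    public static long runAndReport(int[] workloads, int parallelism) {
        ForkJoinPool pool = new ForkJoinPool(parallelism);
        try {
            long result = pool.invoke(new SumTask(workloads, 0, workloads.length));
            // Pool size may exceed parallelism if compensating workers were started.
            System.out.printf("parallelism=%d, poolSize=%d, steals=%d%n",
                    pool.getParallelism(), pool.getPoolSize(), pool.getStealCount());
            return result;
        } finally {
            pool.shutdown();
        }
    }
}
```

A steal count well above zero on an uneven input is the programmatic counterpart of the evenly green threads in figure 5.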

Overall, the ThreadPoolExecutor is preferable where the workload is evenly split across worker threads. Guaranteeing this, however, requires precise knowledge of what the input data looks like. The ForkJoinPool, by contrast, provides good performance irrespective of the input data and is thus a significantly more robust solution.
